Continuing with 500,000+ Data Points for Bitcoin (BTC) Price Prediction

In my Python program, the first method I tried for prediction was SVR (Support Vector Regression). However… how many time steps (past data points per input window) should I use? 🤔
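For anyone wondering what "steps" means here, this is a minimal sketch of the usual sliding-window setup: each sample is the last `time_steps` prices, and the target is the next price. The helper name `make_windows` and the demo numbers are my own illustration (not real BTC data), and this is not necessarily how the original program does it.

```python
def make_windows(prices, time_steps):
    """Turn a 1-D price series into (X, y) pairs for supervised learning.

    Each row of X holds `time_steps` consecutive prices; the matching
    entry of y is the price that comes right after that window.
    """
    if time_steps < 1 or time_steps >= len(prices):
        raise ValueError("time_steps must be between 1 and len(prices) - 1")
    X, y = [], []
    for start in range(len(prices) - time_steps):
        end = start + time_steps
        X.append(list(prices[start:end]))  # the input window
        y.append(prices[end])              # the value to predict
    return X, y


# Tiny made-up series, just to show the shapes (hypothetical values).
demo_prices = [100.0, 101.5, 99.8, 102.3, 103.1, 104.0]
X, y = make_windows(demo_prices, time_steps=3)
print(len(X), len(X[0]))  # number of samples, features per sample
print(X[0], "->", y[0])
```

Assuming scikit-learn is the library in use, `X` and `y` in this shape could then be passed to something like `sklearn.svm.SVR().fit(X, y)`.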

Previously, I used a Raspberry Pi 4B (4GB RAM) for prediction, and… OH… 😩

I don’t even want to count the time again. Just imagine training a new model on a Raspberry Pi!

So, I switched to a virtual machine with a 16-core AMD CPU and 8GB RAM to perform the prediction.

- 60 steps: took 7 hours 😵

- 120 steps: …Man… still running after 20 hours! 😫 It finally finished after 33 hours!
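To get a feel for how much data each run pushes through the model, here is a rough back-of-the-envelope sketch. It assumes one sliding window per data point, 64-bit floats (8 bytes per value), and a round 500,000 points; the function name `window_matrix_stats` is hypothetical. The real program may sample or store the data differently.

```python
def window_matrix_stats(n_points, time_steps, bytes_per_value=8):
    """Return (rows, cols, size_in_mb) of the windowed feature matrix."""
    rows = n_points - time_steps   # one sample per full window
    cols = time_steps              # one feature per past price
    size_mb = rows * cols * bytes_per_value / 1_000_000
    return rows, cols, size_mb


N_POINTS = 500_000  # assumption: rounded from the "500,000+" in the title

for steps in (60, 120):
    rows, cols, size_mb = window_matrix_stats(N_POINTS, steps)
    print(f"{steps:>3} steps: {rows:,} x {cols} matrix, about {size_mb:,.0f} MB")
```

Under these assumptions the feature matrix itself fits in 8GB of RAM either way, so the long runtimes are more likely down to the number of samples: kernel SVR training time generally grows faster than linearly with the sample count.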

Do I need an M4 machine for this? 💻⚡

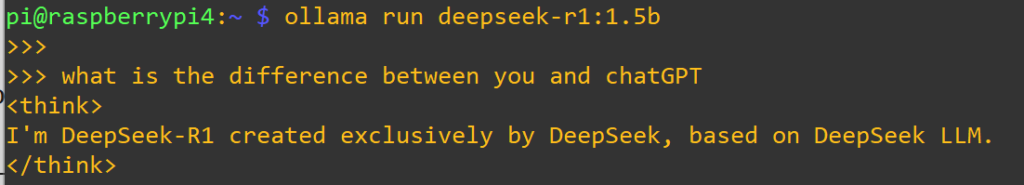

ChatGPT provided another approach.

OK, let’s test it… I’ll let you know how it goes! 🚀

🧪 Quick Example: Effect of More Time Steps

| Time Step (X Length) | Predicted Accuracy | Notes |
|---|---|---|
| 30 | ⭐⭐⭐ | Quick but less accurate for long-term trends. |
| 60 | ⭐⭐⭐⭐ | Balanced context and performance. |
| 120 | ⭐⭐⭐⭐½ | Better for long-term trends but slower. |
| 240 | ⭐⭐ | Risk of overfitting and slower training. |

#SVR #Prediction #Computing #AI #Step #ChatGPT #Python #Bitcoin #crypto #Cryptocurrency #trading #price #virtualmachine #vm #raspberrypi #ram #CPU #CUDA #AMD #Nvidia